Traditional feature extraction methods focus on handcrafted features, which are not very reliable under unconstrained conditions. In recent years, traditional lipreading methods have been gradually replaced by deep learning methods. The advantage of deep learning methods is that they can learn the best features from large databases. This article analyzes typical deep learning methods in detail according to their structural characteristics, and lists existing lipreading databases, including their detailed information and the methods applied to them.

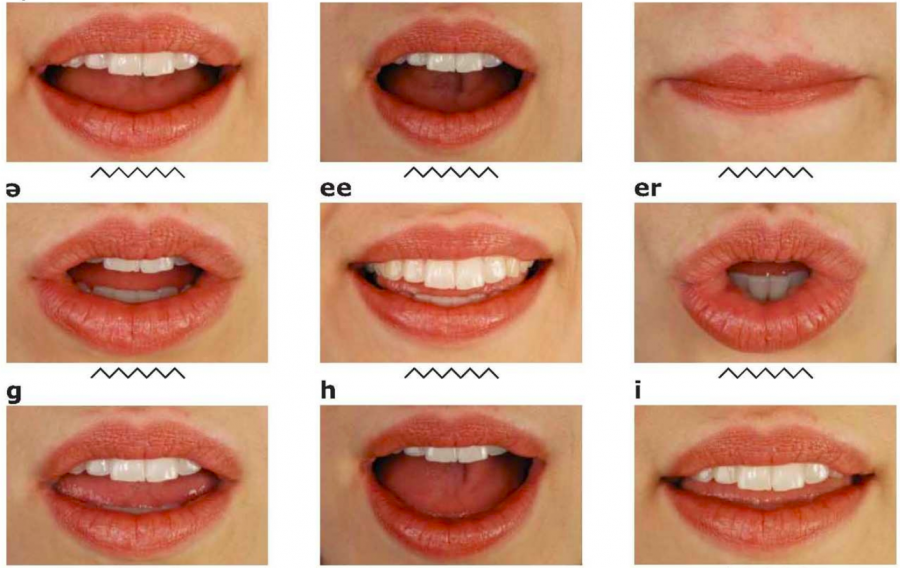

Although automatic speech recognition (ASR) technology is mature, some problems remain unsolved, such as how to accurately identify what a speaker is saying in a noisy environment. Lipreading is a visual speech recognition technology that recognizes speech content from the motion characteristics of the speaker's lips, without a speech signal. Lipreading can therefore detect what the speaker is saying in a noisy environment, even without a voice signal. This article summarizes the main research on lipreading, from traditional methods to deep learning methods. Traditional lipreading methods are discussed mainly from three aspects: lip detection and extraction, lip feature extraction, and classification.

Lipreading is the ability to recognize words or sentences from the mouth movements of a speaking person. This process is also known as Visual Speech Recognition (VSR). Lipreading has two main advantages: it facilitates communication for people with hearing or speaking problems, and it aids speech recognition in noisy environments. In this paper, we propose a lipreading computing system capable of recognizing ten common Arabic words by performing word extraction from the mouth movements. The system receives a video of a person uttering an Arabic word as input and outputs the text of the predicted word. During the implementation stage of the proposed system, three deep learning and neural network architectures are each used in turn to train, validate, and test the system on a locally collected and preprocessed dataset. The dataset contains 1051 videos and will be made available upon request. Moreover, a voting model that combines the three architectures is proposed. With the voting model, a result of 82.84% is achieved.

Lip-reading is a process of interpreting speech by visually analyzing lip movements. Recent research in this area has shifted from simple word recognition to lip-reading sentences in the wild. Different classification schemas have been investigated, including character-based and viseme-based schemas. This paper uses phonemes as a classification schema for lip-reading sentences, both to explore an alternative schema and to enhance system performance. In the presented work, the visual front-end model of the system consists of a spatio-temporal (3D) convolution followed by a 2D ResNet. Transformers with multi-head attention are used for the phoneme recognition models. For the language model, a Recurrent Neural Network is used. The performance of the proposed system has been tested on the BBC Lip Reading Sentences 2 (LRS2) benchmark dataset. Compared with state-of-the-art approaches to lip-reading sentences, the proposed system demonstrates improved performance, with a 10% lower word error rate on average under varying illumination ratios.
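The word error rate (WER) used above to compare sentence-level lip-reading systems can be made concrete with a short sketch. The version below assumes the common definition, word-level edit distance (substitutions, insertions, deletions) divided by the number of reference words; the function name `word_error_rate` and the example sentences are illustrative and do not come from the papers summarized here.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words processed
    # so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1      # reference word missing from hypothesis
            insertion = curr[j - 1] + 1  # extra word in hypothesis
            curr[j] = min(substitution, deletion, insertion)
        prev = curr
    return prev[-1] / len(ref)


if __name__ == "__main__":
    # Illustrative sentences only: one substitution plus one deletion.
    print(word_error_rate("the cat sat on the mat", "the cat sit on mat"))
```

Because the denominator is the reference length, WER can exceed 1.0 when the hypothesis contains many inserted words, so a lower value is better but the metric is not a bounded percentage.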
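The voting model mentioned above for the Arabic word recognizer combines three architectures, but the text does not say how the votes are combined. As a minimal sketch under that caveat, the code below shows two common options: hard voting (majority label) and soft voting (averaged class probabilities). The function names, the tie-breaking rule, and the placeholder labels `word_a` and `word_b` are assumptions made for illustration.

```python
from collections import Counter
from typing import Sequence


def hard_vote(predictions: Sequence[str]) -> str:
    """Return the most frequent label; ties go to the earliest model's label."""
    counts = Counter(predictions)
    best = max(counts.values())
    for label in predictions:
        if counts[label] == best:
            return label
    raise ValueError("predictions must not be empty")


def soft_vote(prob_rows: Sequence[Sequence[float]], labels: Sequence[str]) -> str:
    """Average per-class probabilities across models and pick the top class."""
    n_models = len(prob_rows)
    averaged = [
        sum(row[k] for row in prob_rows) / n_models for k in range(len(labels))
    ]
    top_index = max(range(len(labels)), key=averaged.__getitem__)
    return labels[top_index]


if __name__ == "__main__":
    labels = ["word_a", "word_b"]  # placeholder class names
    # Hypothetical outputs of three models for one video clip.
    probabilities = [[0.6, 0.4], [0.1, 0.9], [0.45, 0.55]]
    per_model = [labels[max(range(2), key=row.__getitem__)] for row in probabilities]
    print(hard_vote(per_model), soft_vote(probabilities, labels))
```

Hard voting needs only each model's predicted label, while soft voting uses the models' confidence and therefore requires comparable probability outputs from all three architectures.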
